Suppose you’re writing an image editor and want to draw a checkerboard background behind the transparent areas of an image. We can simulate this in a macOS Swift Playground by drawing a translucent blue square over a checkerboard pattern:

import Cocoa

let kBoxWidth: CGFloat = 32

class CanvasView: NSView {

override func draw(_ dirtyRect: NSRect) {

let context = NSGraphicsContext.current()!.cgContext

// Draw background.

for y in stride(from: 0, to: bounds.height, by: kBoxWidth) {

for x in stride(from: 0, to: bounds.width, by: kBoxWidth) {

if Int(y / kBoxWidth) % 2 == Int(x / kBoxWidth) % 2 {

NSColor.lightGray.setFill()

} else {

NSColor.white.setFill()

}

context.fill(CGRect(x: x, y: y, width: kBoxWidth, height: kBoxWidth))

}

}

// Draw content.

context.setFillColor(red: 0, green: 0, blue: 0.8, alpha: 0.5)

context.fill(bounds)

}

}

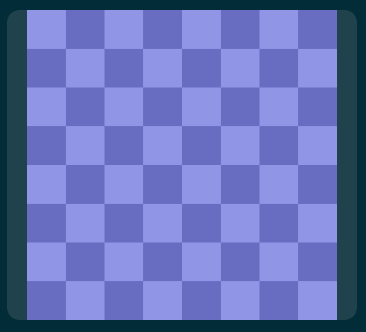

let canvasView = CanvasView(frame: NSRect(x: 0, y: 0, width: 256, height: 256))If you visualize the canvas view, it looks just like what we’d expect:

Now let’s imagine our image editor supports layers and lets users pick a blend mode for each layer. If we simulate this by placing a call to `context.setBlendMode(.softLight)` right after the `// Draw content.` comment, you’ll notice the soft light effect is being composited against the checkerboard background, as if it were part of the image.

This is incorrect and misleading to the user. The checkerboard is only there to visualize transparency, and an exported version of the image (without the checkerboard pattern) would still be just a translucent blue square.
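To put numbers on the mismatch, here is a sketch of a single pixel over a light gray checkerboard cell. It assumes that Core Graphics implements `.softLight` with the soft-light formula from the PDF and W3C Compositing and Blending specifications, and that `NSColor.lightGray` is a gray of roughly 2/3; the `softLight` and `composite` helpers are hypothetical names used only for illustration.

```swift
import Foundation

// Soft-light blend B(cb, cs) for one channel in 0...1, as defined in the
// PDF / W3C compositing specs (assumed to match CGBlendMode.softLight).
func softLight(_ cb: Double, _ cs: Double) -> Double {
    if cs <= 0.5 {
        return cb - (1 - 2 * cs) * cb * (1 - cb)
    }
    let d = cb <= 0.25 ? ((16 * cb - 12) * cb + 4) * cb : cb.squareRoot()
    return cb + (2 * cs - 1) * (d - cb)
}

// Blends a source channel with alpha `alpha` onto an OPAQUE backdrop channel:
// result = alpha * B(cb, cs) + (1 - alpha) * cb
func composite(_ cs: Double, alpha: Double, onto cb: Double,
               blend: (Double, Double) -> Double) -> Double {
    alpha * blend(cb, cs) + (1 - alpha) * cb
}

let gray = 2.0 / 3.0           // assumed value of NSColor.lightGray
let square = [0.0, 0.0, 0.8]   // RGB of the translucent blue square
let alpha = 0.5

// On screen: soft light is evaluated against the gray checkerboard cell.
let onScreen = square.map { composite($0, alpha: alpha, onto: gray, blend: softLight) }
// ≈ [0.556, 0.556, 0.712]

// Exported: drawn onto a transparent canvas, the blend has no backdrop to act
// on, so the file stores the plain square. Shown over the same gray cell, it
// composites normally (B(cb, cs) = cs).
let exported = square.map { composite($0, alpha: alpha, onto: gray, blend: { _, cs in cs }) }
// ≈ [0.333, 0.333, 0.733]
```

The two results differ in every channel, which is exactly the gap between what the editor shows and what the user would get after exporting.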

To fix this, we need finer-grained control over what is actually being blended when we apply a blend mode. We can achieve this with transparency layers in Core Graphics. I tend to think of transparency layers as regions of drawing that are oblivious to whatever came before them, even though that isn’t exactly what they are: everything drawn while a layer is active goes into a separate, fully transparent buffer, and when the layer ends, that buffer is composited back onto the context as a single unit. It’s probably best just to see an example.

In our Playground, we can simply begin a transparency layer right before we draw the content (the translucent blue square) and end the transparency layer before we return from draw(_:):

...

// Draw content.

context.beginTransparencyLayer(auxiliaryInfo: nil)

context.setBlendMode(.softLight)

context.setFillColor(red: 0, green: 0, blue: 0.8, alpha: 0.5)

context.fill(bounds)

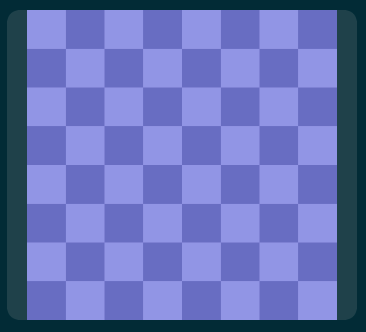

context.endTransparencyLayer()Now, Core Graphics will composite our rectangle as if the checkerboard background was not there, and that’s just what we’d expect:

Anything we draw in our transparency layer will be blended with anything else drawn in the same transparency layer, but not with the checkerboard background pattern that was drawn before it. Only the finished layer, as a whole, is composited over the checkerboard.
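The same behavior can be modeled per pixel. Apple’s documentation for `beginTransparencyLayer(auxiliaryInfo:)` describes drawing as going into a fully transparent backdrop that is composited into the context when the layer ends; the sketch below imitates that with hypothetical `RGBA` and `draw(_:onto:using:)` helpers. It uses multiply in place of soft light to keep the arithmetic short (in this model, any separable blend mode drops out against a fully transparent backdrop), and the yellow shape is a hypothetical second element added only to show blending inside a layer.

```swift
import Foundation

// A non-premultiplied RGBA color with components in 0...1.
struct RGBA: Equatable {
    var r, g, b, a: Double
}

// A separable blend function B(backdrop, source) applied per channel.
typealias Blend = (Double, Double) -> Double

let normal: Blend = { _, cs in cs }
let multiply: Blend = { cb, cs in cb * cs }  // stand-in for .softLight

// Draws `src` onto `dst`: blend first, then source-over compositing.
func draw(_ src: RGBA, onto dst: RGBA, using blend: Blend) -> RGBA {
    let ao = src.a + dst.a * (1 - src.a)
    var out = RGBA(r: 0, g: 0, b: 0, a: ao)
    let channels: [WritableKeyPath<RGBA, Double>] = [\.r, \.g, \.b]
    for k in channels {
        let cs = src[keyPath: k], cb = dst[keyPath: k]
        // The blend term is scaled by the backdrop's alpha, so it vanishes
        // entirely on a fully transparent backdrop.
        let mixed = (1 - dst.a) * cs + dst.a * blend(cb, cs)
        let co = src.a * mixed + dst.a * cb * (1 - src.a)
        out[keyPath: k] = ao == 0 ? 0 : co / ao
    }
    return out
}

let clear = RGBA(r: 0, g: 0, b: 0, a: 0)
let gray = RGBA(r: 2.0 / 3, g: 2.0 / 3, b: 2.0 / 3, a: 1)  // assumed lightGray cell
let yellow = RGBA(r: 1, g: 1, b: 0, a: 1)                   // hypothetical second shape
let blue = RGBA(r: 0, g: 0, b: 0.8, a: 0.5)

// No layer: the blend mode acts on the checkerboard itself.
let direct = draw(blue, onto: gray, using: multiply)

// Layer: draw into a transparent buffer, then composite the result normally.
let layer = draw(blue, onto: clear, using: multiply)
let layered = draw(layer, onto: gray, using: normal)

// Inside a layer, shapes still blend with one another.
let tinted = draw(blue, onto: draw(yellow, onto: clear, using: normal), using: multiply)
```

Here `layer` comes out identical to `blue`, because the multiply mode has nothing underneath it to act on, so `layered` matches what the exported square would look like over the checkerboard while `direct` does not. `tinted` shows the other half of the rule: the blue square does multiply with the yellow shape drawn earlier in the same layer.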